RAID, performance tests


[[image:gmirror-performance.png | Click for raw data and test equipment information]]


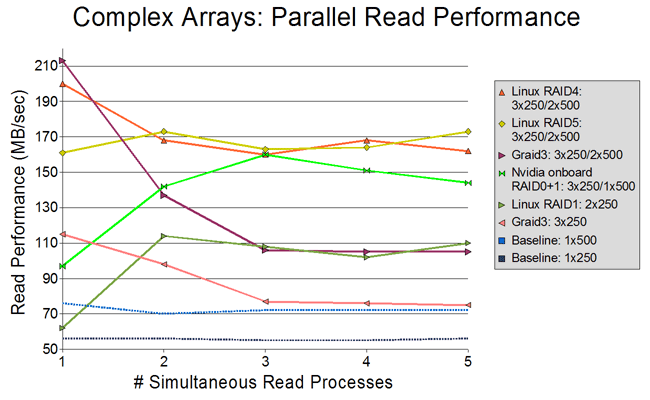

[[image:graid-performance.png | Click for raw data and test equipment information]]


Revision as of 00:07, 28 December 2007


Overview of Array Types

RAID1: Simple Mirroring

While RAID1 offers slow write speeds and even some read performance disadvantages compared to array types such as RAID3, RAID5, or RAID0+1, it does offer one potentially gigantic advantage that the more complex arrays can't: simplicity, and with it survivability. If you lose a RAID controller, lose one or more drives, or even forget how RAID works or what it means, any single surviving member drive of a RAID1 array may be hooked up to a normal drive controller and operated as a standalone drive.

RAID1 is the performance leader when it comes to massively parallel read processes, but offers little or no read performance benefit to a lightly loaded server or workstation which is usually only serving one request at a time.

RAID0+1: The Mirrored Stripe Set

RAID0, unlike RAID1, offers drastically improved write performance and drastically improved single-read performance. However, RAID0 offers no redundancy, so the loss of a single member drive means irrevocable loss of all data on the array. RAID0+1 is an attempt to offer the best of both worlds by creating a stripe set of mirrored pairs, offering most of the advantages of both array types with few of the flaws of either. (Strictly speaking, a stripe set of mirrored pairs is RAID1+0; some vendors label it RAID0+1.) You must lose at least two member drives to cause data loss in such an array, and those two drives must both be members of the same mirrored pair, making this an extremely survivable array type with regard to member failure.
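The survivability claim can be checked by brute force. The following is a minimal sketch, assuming a stripe set of mirrored pairs as described above; the device names and the function name are hypothetical and chosen only for illustration.

```python
from itertools import combinations


def fatal_two_drive_failures(pairs):
    """Return the two-drive failures that destroy a stripe set of mirrored pairs.

    `pairs` is a list of (drive_a, drive_b) tuples, one per mirrored pair.
    """
    drives = [drive for pair in pairs for drive in pair]
    pair_sets = [set(pair) for pair in pairs]
    fatal = []
    for failed in combinations(drives, 2):
        # Data is lost only when both halves of the same mirror are gone.
        if set(failed) in pair_sets:
            fatal.append(failed)
    return fatal


# Hypothetical four-drive array: two mirrored pairs striped together.
pairs = [("ad4", "ad6"), ("ad8", "ad10")]
all_drives = [drive for pair in pairs for drive in pair]
total = len(list(combinations(all_drives, 2)))
fatal = fatal_two_drive_failures(pairs)
print(f"{len(fatal)} of {total} possible two-drive failures are fatal")
```

With four drives, only the two combinations that take out a whole pair lose data; the other four leave one copy of every stripe intact.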

While RAID0+1 does offer high performance and high survivability, it also requires a lot of drives for a relatively small array, and still can't offer the "plug any single member in as a singleton and go" safety blanket of pure RAID1. Like the other striping array types, if you lose the RAID controller itself you may have a scary nail-biter of a time trying to recover the array on replacement hardware.

RAID3: Stripe Set with Parity Member

RAID3 is a RAID0 array with an extra member, which gets a parity block written to it for each set of blocks written to the data members. RAID3 offers significant performance benefits across the board, from write performance to single-read performance to multi-read performance, although not as large a multi-read boost as proper RAID1 implementations offer. The parity member also allows you to lose any single drive without losing the array, but loss of any second member is sufficient to kill the array.
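To see why a single lost member is recoverable, here is a minimal sketch assuming the usual XOR parity scheme; the block contents and the helper name xor_blocks are made up for illustration and are not taken from any particular implementation.

```python
from functools import reduce


def xor_blocks(blocks):
    """XOR equal-length byte blocks together, byte by byte."""
    return bytes(reduce(lambda a, b: a ^ b, column) for column in zip(*blocks))


# Four data members and one parity member, as in a 5-drive array.
data = [b"AAAA", b"BBBB", b"CCCC", b"DDDD"]
parity = xor_blocks(data)

# Simulate losing the third data member and rebuilding it from the rest.
lost_index = 2
survivors = [block for i, block in enumerate(data) if i != lost_index]
rebuilt = xor_blocks(survivors + [parity])
print(rebuilt == data[lost_index])
```

XOR-ing the surviving data blocks with the parity block cancels everything except the missing block. Losing a second member leaves two unknowns and only one parity equation, which is why the array cannot survive it.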

Use of the parity member in read operations is optional with FreeBSD's RAID3 implementation (graid3), but testing suggests that round-robin reads which include the parity member offer little to no performance benefit and can cause severe performance decreases, so it doesn't seem to be a good idea for most settings.

Graid3 only allows array sizes of 2^n+1 - i.e. 3, 5, 9, and so on. This may be a significant handicap to those trying to squeeze the absolute most out of an array without breaking the bank.
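The sizes listed (3, 5, 9) are each one more than a power of two: a power-of-two number of data members plus the single parity member. A small sketch of that check follows; the function name is hypothetical and this is not how graid3 itself validates its arguments.

```python
def is_valid_graid3_size(members):
    """True when `members` is a power-of-two count of data drives plus one parity drive."""
    data_members = members - 1
    # A power of two has exactly one bit set; require at least two data members.
    return data_members >= 2 and (data_members & (data_members - 1)) == 0


valid_sizes = [n for n in range(2, 20) if is_valid_graid3_size(n)]
print(valid_sizes)
```

Someone with, say, four or six drives on hand cannot use them all in one such array, which is the handicap described above.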

RAID3 is extremely rare outside the FreeBSD world - I have neither seen nor heard of it in actual use other than with graid3 under FreeBSD.

RAID5: Stripe Set with Distributed Parity

RAID5 is extremely similar to RAID3, except that parity blocks are distributed among all member drives. Where a RAID3 array with 5 member drives will always write 4 data blocks to members 1,2,3,4 and the parity block to member 5, a RAID5 array with 5 member drives also writes 4 data blocks and 1 parity block per cycle but rotates which member holds the parity: for example, parity on member 1 and data on members 2,3,4,5 in one cycle, then parity on member 2 and data on members 3,4,5,1 in the next. The exact rotation order varies between implementations.
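The difference in parity placement can be sketched in a few lines. This assumes exactly one parity block per stripe and a simple rotation that starts at member 1 and advances by one member each stripe; real RAID5 layouts differ in rotation order, and the function names here are illustrative only.

```python
def raid3_parity_member(stripe, members):
    """Dedicated parity: the last member holds parity for every stripe."""
    return members


def raid5_parity_member(stripe, members):
    """Distributed parity: the parity position advances one member per stripe."""
    return (stripe % members) + 1


def stripe_layout(parity_member, members):
    """Mark each member (1-based) as holding data 'D' or parity 'P'."""
    return ["P" if m == parity_member else "D" for m in range(1, members + 1)]


MEMBERS = 5
for stripe in range(MEMBERS):
    raid3 = " ".join(stripe_layout(raid3_parity_member(stripe, MEMBERS), MEMBERS))
    raid5 = " ".join(stripe_layout(raid5_parity_member(stripe, MEMBERS), MEMBERS))
    print(f"stripe {stripe}: RAID3 [{raid3}]  RAID5 [{raid5}]")
```

In both cases every stripe carries four data blocks and one parity block; only the location of the parity block differs.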

RAID5 and RAID3 are obviously extremely similar. Although RAID5 has dominated the industry as a whole for decades, there has been some controversy in recent years over their relative performance levels. We will test both types in this article.

FreeBSD does not currently support RAID5 at all. Even inexpensive "hardware" controllers such as the Nvidia onboard types (which use the CPU to XOR data blocks to generate parity and vice versa) are unsupported.

RAID1 Disk Performance

Gmirror, unfortunately, is not doing well at all at this time. The 2-drive and 3-drive gmirror arrays performed grossly worse than even a single baseline drive. The 5-drive gmirror managed to outperform the baseline 250GB drive, but was handily beaten by the 500GB baseline drive, the Nvidia onboard RAID1 implementation, and especially the Linux RAID1 implementations, which dominated everything across the board.

Only results for gmirror's round-robin balance algorithm are shown here, because the load and split balance algorithms performed even more poorly than round-robin. Results for split are available as raw data if you click the image, but were not included on the graph itself. Load results are not available because initial testing showed it performing even worse than split and so the tests were not allowed to complete.

It is interesting to note that the Nvidia, Promise, and Linux RAID1 implementations all display significant variation in how they handle simultaneous processes - all three showed differences of up to 15, 30, and even 38 seconds in the completion times of otherwise identical cp processes to /dev/null that were started simultaneously. While gmirror's sheer performance is abysmal, it is worth noting that it does at least handle processes consistently; it never finished processes more than a few hundred milliseconds apart.

The Promise TX-2300 RAID1 implementation was just plain poor, performing nearly as badly as gmirror and failing to improve on the scores of the single baseline drive, while still turning in the same oddly inconsistent times as the vastly higher-performing arrays.

The Gmirror and Linux implementations were the only ones tested which allowed RAID1 arrays with more than two member drives.

Complex Array Performance

Graid3 is doing noticeably better than gmirror. The 5-drive Graid3 implementation handily outperformed the 2-drive mirror across the board. The 3-drive Graid3 implementation performed somewhat slower than the Linux or Nvidia 2-drive RAID1 arrays in the 2- and 3-process tests and significantly slower in the 4- and 5-process tests, but nearly doubled their single-process performance.

Nvidia's RAID0+1 presents a compelling challenge, clearly outperforming the 5-drive graid3 array in the 3-, 4-, and 5-process tests - but equally significantly, it's roughly on par for the 2-process test and drastically slower on the single-process test, even getting outperformed by the 3-drive RAID3 array. We'll go into what that means for the real world more in the overall high performance section next.

Only results for graid3's default configuration are shown here, because the -r configuration (always use the parity member during reads) performed slightly to significantly poorer in all but the 5-process test, in which it performed only very slightly better. It is possible that a more massively parallel test (or a test of a much less contiguous filesystem) would show some advantage to -r, but for these tests, no advantage is apparent. Raw data is available on the image page itself.

Equipment

- FreeBSD 6.2-RELEASE (amd64)

- Ubuntu Server 7.04 (amd64)

- Athlon X2 5000+

- 2GB DDR2 SDRAM

- Nvidia nForce MCP51 SATA 300 onboard RAID controller

- Promise TX2300 SATA 300 RAID controller

- 3x Western Digital 250GB drives (WDC WD2500JS-22NCB1 10.02E02 SATA-300)

- 2x Western Digital 500GB drives (WDC WD5000AAKS-00YGA0 12.01C02 SATA-300)

Methodology

The read-ahead cache was changed from the default value of 8 to 128 for all FreeBSD tests performed, using sysctl -w vfs.read_max=128. Initial testing showed that increasing vfs.read_max produced dramatic performance increases for all tested configurations, including the baseline single drive. The value of 128 was arrived at by doubling vfs.read_max until no further significant performance increase was seen (at vfs.read_max=256), then backing down to the previous value.
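The doubling procedure can be expressed as a short loop. This is a sketch under stated assumptions, not the script used for these tests: the 5% significance threshold, the function name, and the throughput figures below are all invented for illustration.

```python
def tune_by_doubling(measure, start, threshold=0.05, limit=1 << 20):
    """Double a setting until throughput stops improving by more than `threshold`.

    `measure` maps a setting value to a measured speed. Returns the last value
    that still produced a significant improvement.
    """
    best_value = start
    best_speed = measure(start)
    value = start * 2
    while value <= limit:
        speed = measure(value)
        if speed < best_speed * (1 + threshold):
            break  # no significant gain: back down to the previous value
        best_value, best_speed = value, speed
        value *= 2
    return best_value


# Hypothetical throughput figures in MB/s, NOT measured results.
fake_results = {8: 40.0, 16: 55.0, 32: 70.0, 64: 85.0, 128: 95.0, 256: 96.0}
chosen = tune_by_doubling(fake_results.__getitem__, 8)
print(chosen)
```

In a real run, `measure` would set vfs.read_max and repeat the copy test rather than look up a canned number.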

Similarly, for the Linux tests the read-ahead cache was changed from the default value of 256 to 4096, using hdparm /dev/md0 -a4096. Baseline drive performance was not tested under Linux, but extremely erratic initial test results on the first RAID1 configuration tested led me to google Linux disk performance tweaking so as to make a completely fair comparison. The 4096 value was arrived at by successive doubling and testing until the highest performing value was found, then testing against 3/4 of its value. The RAID1 array was created using the command mdadm --create /dev/md0 --level raid1 -n 5 --assume-clean /dev/sdb /dev/sdc /dev/sdd /dev/sde /dev/sdf, and subsequently shrunk to three members and then to two members as testing completed.

For the actual testing, 5 individual 3200MB files, named random1.bin through random5.bin, were created on each tested device or array using dd if=/dev/random bs=16m count=200. These files were then cp'ed from the device or array to /dev/null. Elapsed times were generated by echoing a timestamp immediately before beginning the test and immediately at the end of each individual process, then subtracting the beginning timestamp from the timestamp of the last process to complete. Speeds shown are simply the amount of data in MB copied to /dev/null (3200, 6400, 9600, 12800, or 16000) divided by the total elapsed time.
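The speed calculation described above amounts to the following. It is a minimal sketch: the function names and the timestamps are hypothetical, and only the 3200MB file size comes from the methodology itself.

```python
FILE_MB = 3200  # size of each test file, per the methodology


def throughput_mb_s(start, finish_times):
    """MB/s for a run with one simultaneous copy per entry in `finish_times`.

    Elapsed time runs from the shared start to the last process to finish.
    """
    elapsed = max(finish_times) - start
    return FILE_MB * len(finish_times) / elapsed


def finish_spread(finish_times):
    """Seconds between the first and last process to finish."""
    return max(finish_times) - min(finish_times)


# Hypothetical timestamps in seconds for a 3-process run, NOT measured results.
start = 1000.0
finishes = [1180.0, 1195.0, 1200.0]
speed = throughput_mb_s(start, finishes)
spread = finish_spread(finishes)
print(f"{speed:.1f} MB/s, processes finished {spread:.0f} s apart")
```

Here 9600MB over 200 seconds gives 48.0 MB/s. The spread figure corresponds to the process-to-process completion differences discussed in the RAID1 results.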

Notes

The methodology used produces a very highly contiguous filesystem, which may skew results significantly higher than in some real-world settings - particularly in the single-process test. Presumably the multiple process copy tests would be much less affected by fragmentation in real-world filesystems, since by their nature they require a significant amount of drive seeks between blocks of the individual files being copied throughout the test.

In the 5-drive Graid3 array tested, the (significantly faster) 500GB drives were positioned as the last two elements of the array. This is significant particularly because this means the parity drive was noticeably faster than 3 of the 4 data drives in this configuration; some other testing on equipment not listed here leads me to believe that this had a favorable impact when using the -r configuration. There was not, however, enough of an improvement to make the -r results worth including on the graph.

Write performance was also tested on each of the devices and arrays listed and will be included in graphs at a later date (for now, raw data is available in the discussion page).

Googling "gmirror performance" and "gmirror slow" did not get me much of a return; just one other individual wondering why his gmirror was so abominably slow - so I reformatted the test system with 6.2-RELEASE (i386) and retested. Unfortunately, the gmirror results did not improve with the change of platform back to i386. It strikes me as very odd that graid3 with only 3 drives (therefore only 2 data drives) outperforms even a five-drive gmirror implementation. And in sharp contrast to gmirror, of course, the Linux kernel RAID1 results speak for themselves.